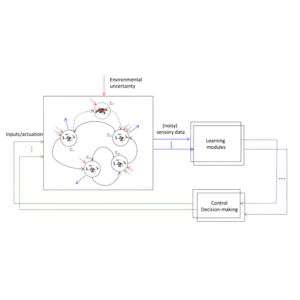

This research proposal aims to advance the safety of data-driven (or learning-based) control, with applications to the safe exploration of unknown environments (e.g., search and rescue with aerial robots). The project will investigate the fundamental limits of safe learning-based control in terms of both sample complexity and computational complexity. Informed by these limits, we will develop algorithms at the intersection of machine learning, reinforcement learning, and robust control to provide safety certificates that are less conservative and more computationally efficient than those of state-of-the-art methods.

People

Necmiye Ozay (ECE, ROB; Engineering)

Dimitra Panagou (AERO, ROB; Engineering)

Peter Seiler (ECE; Engineering)

Funding

Funding: $45K (2023)

Goal: Create a framework for learning-based algorithms that allow autonomous robots to explore unknown environments with safety guarantees.

Token Investors: Necmiye Ozay, Dimitra Panagou, and Peter Seiler

Project ID: 1121